Kafka Use Cases: Real-World Patterns for Streaming Data

Discover essential Kafka use cases and how real-time streaming powers analytics, data pipelines, and event-driven architectures.

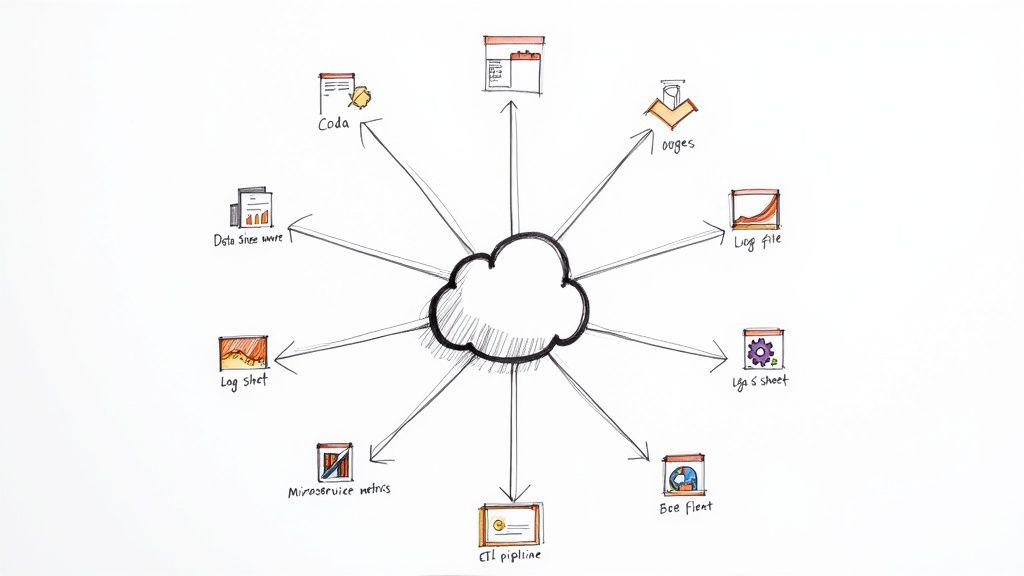

In the world of real-time data, Apache Kafka has become the definitive backbone for countless modern applications. While its reputation for high-throughput, scalable messaging is well-known, understanding its practical applications is key to unlocking its true potential. Moving beyond theory, the real value lies in seeing how this technology solves specific, complex business problems.

This article dives deep into 10 essential Kafka use cases that are actively shaping industries today. We’ll move past surface-level descriptions to provide a strategic breakdown of each scenario, complete with architectural patterns, tactical advice, and actionable takeaways. Our goal is to equip you with a replicable blueprint for each application, helping you understand not just what Kafka can do, but how to implement these patterns effectively.

You will learn how to structure data pipelines for log aggregation, build event-driven architectures for microservices, and implement real-time analytics for fraud detection. We will also critically evaluate when a self-managed Kafka deployment makes sense versus when leveraging a managed service like Streamkap can accelerate your projects while significantly reducing operational overhead. Let’s explore the concrete ways Kafka is enabling everything from instant financial alerts to hyper-personalized user experiences, providing you with the insights needed to apply these powerful concepts to your own systems.

1. Real-Time Event Streaming

At its core, Apache Kafka excels as a high-throughput, distributed streaming platform, making real-time event streaming one of its most fundamental and powerful use cases. This pattern involves treating business activities not as static data but as a continuous flow of events. Kafka acts as the central nervous system, ingesting event streams from countless sources like user clicks, IoT sensor readings, or financial transactions, and making them available for immediate consumption.

This allows organizations to decouple data producers from consumers. A single event, like a “user adds item to cart,” can be published once to a Kafka topic and then consumed independently by multiple systems: a real-time analytics dashboard, a fraud detection engine, and an inventory management service, all without impacting each other.
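The fan-out described above can be illustrated with a toy, in-memory stand-in for a single-partition topic. This is only a sketch of the idea, not Kafka's API: the `InMemoryTopic` class, the group names, and the event fields are all assumptions made for illustration.

```python
from collections import defaultdict


class InMemoryTopic:
    """Toy stand-in for a single-partition topic: an append-only list."""

    def __init__(self):
        self._log = []                     # retained events, in publish order
        self._offsets = defaultdict(int)   # next offset to read, per consumer group

    def publish(self, event):
        """Append an event once and return its offset."""
        self._log.append(event)
        return len(self._log) - 1

    def poll(self, group):
        """Return events this group has not read yet and advance its offset."""
        start = self._offsets[group]
        events = self._log[start:]
        self._offsets[group] = len(self._log)
        return events


topic = InMemoryTopic()
topic.publish({"type": "item_added_to_cart", "user": "u1", "sku": "A-42"})

# Each group reads the same event without affecting the others.
analytics_seen = topic.poll("analytics-dashboard")
fraud_seen = topic.poll("fraud-engine")

topic.publish({"type": "item_added_to_cart", "user": "u2", "sku": "B-7"})

# A slower consumer catches up on everything it has missed.
inventory_seen = topic.poll("inventory-service")
```

Because each group tracks its own offset, adding a new consumer requires no change on the producer side.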

Strategic Breakdown

Companies like Netflix and Uber have perfected this approach. Netflix uses event streaming to process billions of user interaction events daily, feeding its recommendation engine in near real-time. Uber leverages Kafka to process location data from millions of drivers and riders, enabling dynamic pricing, route optimization, and ETA calculations. These examples showcase how Kafka use cases transform operations from batch-oriented to event-driven.

Key Insight:

The power isn’t just in handling high volume, but in enabling a “publish once, read many” architecture.

This allows new applications and services to tap into existing event streams without requiring changes to the original

When an event occurs, like a customer placing an order, the `Order Service` publishes an `OrderCreated` event to a Kafka topic. The `Inventory Service`, `Shipping Service`, and `Notification Service` can all subscribe to this topic and react independently. This decouples the services; the `Order Service` doesn’t need to know which downstream systems exist or if they are even online. This pattern is one of the most transformative Kafka use cases for building robust, distributed systems.

Strategic Breakdown

Companies like Airbnb and Booking.com leverage Kafka to orchestrate complex workflows across their many microservices. For instance, a new booking event on Booking.com can trigger updates in availability, pricing, and user profile systems simultaneously, all without direct service-to-service calls. This asynchronous flow improves system resilience; if the notification service is temporarily down, booking events are retained in Kafka and processed once the service recovers, ensuring no data is lost.

Key Insight:

Kafka acts as a durable event log, not just a message bus. This allows services to be taken offline for maintenance or to be scaled independently without disrupting the entire system.

It also enables new services to be introduced that can replay the entire history of events to build their state.
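As a rough illustration of replaying history to build state, the sketch below folds a retained list of events into the current status of each order. `OrderCreated` comes from the example above; the `OrderShipped` and `OrderCancelled` event types, the `order_id` field, and the function name are hypothetical.

```python
def rebuild_order_status(event_log):
    """Fold an ordered list of events into the latest status per order."""
    # Hypothetical mapping from event type to resulting order status.
    transitions = {
        "OrderCreated": "created",
        "OrderShipped": "shipped",
        "OrderCancelled": "cancelled",
    }
    status = {}
    for event in event_log:
        new_status = transitions.get(event["type"])
        if new_status is not None:          # ignore event types we do not know
            status[event["order_id"]] = new_status
    return status


# Events as a newly introduced service might read them from the start of a topic.
retained_events = [
    {"type": "OrderCreated", "order_id": "o-1"},
    {"type": "OrderCreated", "order_id": "o-2"},
    {"type": "OrderShipped", "order_id": "o-1"},
    {"type": "OrderCancelled", "order_id": "o-2"},
    {"type": "OrderCreated", "order_id": "o-3"},
]

order_status = rebuild_order_status(retained_events)
```

The result depends on reading events in order, which is why the replay starts from the earliest retained offset.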

Actionable Takeaways

To build a robust event-driven microservices architecture, consider these tactics:

- Define Clear Domain Events: Use a schema registry to enforce well-defined, versioned event contracts. This prevents communication breakdowns as services and their event structures evolve over time.

- Design for Idempotency: Consumers may process the same event more than once due to network issues or retries. Design your consumer logic to be idempotent, ensuring that reprocessing an event doesn’t cause duplicate data or incorrect state changes.

- Implement Distributed Tracing: Use correlation IDs within event headers to trace a single user request across multiple services. This is crucial for debugging and monitoring complex, asynchronous workflows. You can learn more about how services read and write messages directly with Kafka to implement these patterns.
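The idempotency and tracing takeaways above can be sketched together in a few lines. This is a minimal in-memory illustration, not a production design: the class name, the `event_id` field, and the header layout are assumptions.

```python
class IdempotentInventoryConsumer:
    """Applies each stock-reservation event at most once, keyed by event_id."""

    def __init__(self, stock):
        self.stock = dict(stock)       # sku -> units available
        self.processed_ids = set()     # event IDs that were already applied
        self.trace = []                # (correlation_id, event_id) pairs for debugging

    def handle(self, event):
        """Return True if the event changed state, False if it was a duplicate."""
        event_id = event["event_id"]
        if event_id in self.processed_ids:
            return False               # redelivery: skip without side effects
        self.stock[event["sku"]] -= event["quantity"]
        self.processed_ids.add(event_id)
        self.trace.append((event["headers"]["correlation_id"], event_id))
        return True


consumer = IdempotentInventoryConsumer({"A-42": 10})
event = {
    "event_id": "evt-1",
    "sku": "A-42",
    "quantity": 2,
    "headers": {"correlation_id": "req-123"},
}

first = consumer.handle(event)
second = consumer.handle(event)   # simulated redelivery after a retry
```

In a real service the set of processed IDs would need to be stored durably and updated atomically with the state change; the in-memory set here only demonstrates the check.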

6. IoT Data Ingestion and Processing

Kafka is engineered to handle the relentless, high-volume data streams generated by Internet of Things (IoT) deployments. It acts as a highly scalable and fault-tolerant buffer, ingesting massive amounts of telemetry from sensors and connected devices. This provides a robust pipeline for capturing, processing, and routing device data for real-time monitoring, predictive maintenance, and large-scale analytics.

The challenge with IoT is not just the volume but also the sporadic and often unreliable nature of network connections. Kafka’s durability ensures that sensor data is not lost, even if downstream processing systems are temporarily unavailable. It decouples data ingestion from processing, allowing millions of devices to publish data without overwhelming the analytics infrastructure.

Strategic Breakdown

Connected car platforms from manufacturers like Tesla and BMW exemplify this pattern. They stream terabytes of diagnostic and operational data from their vehicle fleets to Kafka topics. This data feeds everything from real-time vehicle health dashboards for service centers to long-term R&D models for improving battery performance and autonomous driving algorithms. Similarly, companies like John Deere use Kafka to process data from agricultural equipment, optimizing crop yields and machinery uptime.

Key Insight:

Kafka transforms IoT from a simple data collection problem into a real-time event-driven ecosystem.

By using Kafka as a central bus, a single temperature reading from a factory machine can trigger an immediate alert, be stored for historical trend analysis, and feed a predictive maintenance model simultaneously.

Actionable Takeaways

To build a resilient IoT data pipeline with Kafka, consider these tactics:

- Bridge Edge Protocols: Use an MQTT bridge (like the Kafka Connect MQTT source connector) to translate lightweight device protocols into Kafka messages. This allows constrained devices to communicate efficiently while leveraging Kafka’s power on the backend.

- Partition by `device_id`: Partitioning your topics by a unique device identifier is critical. It ensures that all messages from a single sensor or device land in the same partition and are therefore consumed in the order they were written, which is essential for accurate state tracking and time-series analysis.

- Compress at the Source: Implement message compression (like Snappy or Gzip) on the device or edge gateway before sending data to Kafka. This significantly reduces network bandwidth requirements and storage costs, which is crucial in large-scale IoT deployments.
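The partitioning and compression tactics above can be sketched with the standard library alone. The partition count, field names, and helper functions are assumptions, and CRC32 is only a stand-in hash: Kafka's default partitioner for keyed messages uses murmur2.

```python
import gzip
import json
import zlib

NUM_PARTITIONS = 6  # assumed partition count for the telemetry topic


def partition_for(device_id, num_partitions=NUM_PARTITIONS):
    """Map a device ID to a stable partition number.

    CRC32 is a stand-in here; Kafka's default partitioner uses murmur2.
    """
    return zlib.crc32(device_id.encode("utf-8")) % num_partitions


def compress_batch(readings):
    """Serialize a batch of readings to compact JSON and gzip it."""
    payload = json.dumps(readings, separators=(",", ":")).encode("utf-8")
    return gzip.compress(payload)


def decompress_batch(blob):
    """Reverse compress_batch."""
    return json.loads(gzip.decompress(blob).decode("utf-8"))


# A batch of repetitive telemetry, as an edge gateway might buffer it.
readings = [{"device_id": "sensor-17", "seq": i, "temp_c": 21.5} for i in range(200)]
raw_size = len(json.dumps(readings).encode("utf-8"))
blob = compress_batch(readings)
```

The same key always maps to the same partition, which is what preserves per-device ordering. Kafka producers can also compress batches themselves through the `compression.type` setting, so hand-rolled compression like this is mainly relevant on gateways that sit in front of the producer.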

7. Real-Time User Personalization and Recommendations

Kafka is the engine behind modern recommendation systems that adapt instantly to user behavior. By capturing a continuous stream of user actions like clicks, views, purchases, and ratings, Kafka enables machine learning pipelines to process this data in real-time. This allows systems to generate fresh, highly relevant recommendations that dramatically improve user engagement and conversion.

This approach moves beyond static, batch-processed recommendations. An event, such as a user viewing a specific product, is published to a Kafka topic. This event is then consumed by various services that update user profiles, retrain models, and push updated recommendations to the user interface, often within seconds. This creates a dynamic and responsive user experience.

Strategic Breakdown

Companies like Spotify and Netflix have built their entire user experience around this principle. Spotify streams listening data to update personalized playlists like “Discover Weekly” and “Daily Mix” in near real-time. Netflix processes viewing habits to constantly refine its recommendation rows, suggesting content that aligns with a user’s most recent activity. These Kafka use cases demonstrate how to transform user interaction data into immediate value.

Key Insight:

The true advantage is the ability to weight recent behavior more heavily.

By using stream processing with techniques like sliding windows, systems can infer a user’s current intent, leading to recommendations that feel intuitive and timely rather than being based on stale historical data.
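One way to picture weighting recent behavior is a sliding window combined with exponential decay. The sketch below is an illustration under assumed parameters (a one-hour window and a ten-minute half-life), not a description of any particular company's system.

```python
def recency_weighted_scores(events, now, window_seconds=3600.0, half_life_seconds=600.0):
    """Score categories from (timestamp, category) events.

    Events outside the sliding window are ignored; events inside it count
    for less the older they are (weight halves every half_life_seconds).
    """
    scores = {}
    for ts, category in events:
        age = now - ts
        if age < 0 or age > window_seconds:
            continue                                  # outside the window
        weight = 0.5 ** (age / half_life_seconds)     # exponential decay
        scores[category] = scores.get(category, 0.0) + weight
    return scores


# Three old jazz plays versus two recent rock plays (timestamps in seconds).
events = [
    (-100, "podcast"),   # older than the window, ignored
    (0, "jazz"),
    (0, "jazz"),
    (0, "jazz"),
    (3000, "rock"),
    (3300, "rock"),
]
scores = recency_weighted_scores(events, now=3600)
top_category = max(scores, key=scores.get)
```

Even though jazz has more events, the two recent rock events dominate the score, which is the behavior the key insight describes.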

Actionable Takeaways

To build an effective real-time personalization engine, consider these tactics:

- Enrich Event Streams: Capture not just the action but also rich context. Include metadata like device type, location, and time of day in your Kafka events to build more sophisticated user profiles.

- Leverage a Feature Store: Use a feature store that can be updated by Kafka consumers. This provides ML models with low-latency access to the latest user features without querying large databases.

- Monitor Recommendation Diversity: Track metrics to ensure your algorithms don’t create “filter bubbles” by over-specializing recommendations. Introduce logic to ensure a healthy mix of familiar and discovery-oriented suggestions.

8. Financial Services and Fraud Detection

In the financial sector, where milliseconds can mean the difference between a secure transaction and significant loss, Kafka serves as critical infrastructure for real-time fraud detection. This use case involves streaming immense volumes of financial events, such as credit card swipes, wire transfers, and login attempts, through a central pipeline. These events are then fed into complex event processing (CEP) engines and machine learning models to identify anomalies and fraudulent patterns instantly.

This architecture allows financial institutions to move from reactive, batch-based fraud analysis to a proactive, real-time defense mechanism. A single payment event is published to a Kafka topic and can be consumed simultaneously by a fraud scoring engine, a transaction ledger, and a customer notification service, ensuring a coordinated and immediate response to potential threats.
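A very simple example of the kind of rule a fraud scoring consumer might apply is a velocity check: flag a card that produces too many payment events within a short window. The threshold, window size, and class name below are assumptions, and the sketch expects timestamps for a given card to arrive in non-decreasing order.

```python
from collections import defaultdict, deque


class VelocityRule:
    """Flags a card when it exceeds max_events within window_seconds."""

    def __init__(self, max_events=3, window_seconds=60.0):
        self.max_events = max_events
        self.window_seconds = window_seconds
        self._recent = defaultdict(deque)   # card_id -> timestamps inside the window

    def is_suspicious(self, card_id, timestamp):
        """Record one payment event and report whether the card is over the limit."""
        window = self._recent[card_id]
        window.append(timestamp)
        # Evict timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_events


rule = VelocityRule(max_events=3, window_seconds=60.0)
burst = [rule.is_suspicious("card-A", t) for t in (0, 10, 20, 30)]
later = rule.is_suspicious("card-A", 200)        # burst has aged out by now
other_card = rule.is_suspicious("card-B", 25)    # state is tracked per card
```

Keying the topic by card identifier would keep all events for one card in a single partition, which is what makes the ordering assumption reasonable.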

Strategic Breakdown

Companies like PayPal and Stripe have built their fraud prevention systems around Kafka’s capabilities. PayPal processes trillions of dollars in payments annually, using Kafka to stream transaction data through its risk models in real-time, enabling it to score and block fraudulent payments before they are completed. Similarly, Stripe leverages Kafka to analyze payment signals from millions of businesses, training its machine learning models to adapt to new fraud vectors as they emerge. These prominent Kafka use cases demonstrate the platform’s ability to provide the speed and reliability required for mission-critical financial operations.

Key Insight: Kafka’s immutable, ordered log is crucial for financial systems.

It provides a verifiable and auditable trail of every transaction and event, which is essential for regulatory compliance (like PCI-DSS) and for training more accurate fraud detection models by replaying historical data.

Actionable Takeaways

To build a robust, real-time fraud detection system with Kafka, consider these points:

- Prioritize Security: Encrypt data in transit with TLS and use Kafka ACLs to control access to sensitive topics. Consider application-level encryption for personally identifiable information (PII).

- Design for Low Latency: Optimize producer and consumer configurations for sub-second processing. Keep producer batching tight (for example, a small `linger.ms`) and use compact serialization formats (e.g., Avro), so that throughput gains do not come at the cost of added latency.

- Create a Model Feedback Loop: After a fraud model makes a prediction, stream the outcome (e.g., confirmed fraud, false positive) back into another Kafka topic. This allows for continuous, real-time model retraining and improvement.

9. Website Activity Tracking and User Analytics

One of the most common and impactful Kafka use cases is tracking website and mobile application activity. By treating user interactions like clicks, page views, and form submissions as a continuous stream of events, Kafka provides the scalable backbone needed to ingest massive volumes of clickstream data. This data is then funneled to analytics platforms, real-time dashboards, and machine learning models to understand user journeys, measure engagement, and personalize experiences.

This approach allows engineering teams to capture every granular user action without overwhelming application databases. The event-driven architecture means a single “click” event can be sent to a Kafka topic and consumed simultaneously by an A/B testing framework, a user sessionization service, and a long-term data warehouse, all operating independently.
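To make the sessionization consumer mentioned above more concrete, here is a minimal sketch that groups click timestamps into sessions separated by an inactivity gap. The 30-minute gap and the `(user_id, timestamp)` event shape are assumptions.

```python
def sessionize(events, gap_seconds=1800):
    """Group (user_id, timestamp) click events into per-user sessions.

    A new session starts whenever a user has been inactive for longer
    than gap_seconds. Returns {user_id: [[ts, ...], [ts, ...], ...]}.
    """
    sessions = {}
    for user_id, ts in sorted(events):
        user_sessions = sessions.setdefault(user_id, [])
        if user_sessions and ts - user_sessions[-1][-1] <= gap_seconds:
            user_sessions[-1].append(ts)      # continue the current session
        else:
            user_sessions.append([ts])        # start a new session
    return sessions


# Clicks arrive out of order across users; timestamps are in seconds.
clicks = [("u1", 600), ("u2", 100), ("u1", 0), ("u1", 5000)]
sessions = sessionize(clicks)
```

This batch-style version sorts all events up front for clarity. A streaming implementation would instead keep per-user state and close sessions as the gap elapses.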

Strategic Breakdown

Companies like Airbnb and Slack leverage this pattern extensively. Airbnb’s event pipeline processes user behavior data to power its search ranking algorithms and personalize recommendations. Slack’s analytics platform relies on Kafka to ingest billions of daily events from its applications, providing insights into feature usage and team collaboration patterns. These examples demonstrate how Kafka enables ...

... learn more about Change Data Capture and its strategic importance.

Key Insight:

CDC with Kafka transforms a database from a passive repository into an active event source.

This unlocks the value trapped in legacy systems, enabling real-time analytics, data synchronization, and event-driven architectures without requiring risky and expensive modifications to the source system itself.

Actionable Takeaways

To successfully implement a CDC pipeline with Kafka, consider these points:

- Use a Dedicated CDC Tool: Leverage robust, purpose-built tools like Debezium. These connectors are designed to handle the complexities of reading transaction logs from various databases (e.g., PostgreSQL, MySQL, MongoDB) and converting them into structured Kafka events.

- Handle Schema Evolution: Database schemas change. Use a Schema Registry to manage schema versions for your Kafka topics. This prevents data pipeline failures when a column is added or modified in the source table.

- Monitor Replication Lag: Closely monitor the lag between a change occurring in the source database and it appearing in Kafka. Significant lag can undermine the “real-time” value of your pipeline and may indicate performance bottlenecks in the connector or source system.
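The sketch below shows how a consumer might apply change events to a local replica and estimate lag. It assumes a simplified Debezium-style envelope (`op`, `before`, `after`, `ts_ms`, and `source.ts_ms`) and a primary key column named `id`; the real envelope carries more fields, and the table contents are made up.

```python
def apply_change(table, change):
    """Apply one simplified change event to a dict keyed by primary key."""
    op = change["op"]
    if op in ("c", "r", "u"):        # create, snapshot read, update
        row = change["after"]
        table[row["id"]] = row
    elif op == "d":                  # delete: only the 'before' image is present
        table.pop(change["before"]["id"], None)
    return table


def connector_lag_ms(change):
    """Milliseconds between the database change and the connector processing it."""
    return change["ts_ms"] - change["source"]["ts_ms"]


changes = [
    {"op": "c", "before": None, "after": {"id": 1, "email": "a@example.com"},
     "ts_ms": 1700000000500, "source": {"ts_ms": 1700000000000}},
    {"op": "u", "before": {"id": 1, "email": "a@example.com"},
     "after": {"id": 1, "email": "b@example.com"},
     "ts_ms": 1700000002100, "source": {"ts_ms": 1700000002000}},
    {"op": "c", "before": None, "after": {"id": 2, "email": "c@example.com"},
     "ts_ms": 1700000003050, "source": {"ts_ms": 1700000003000}},
    {"op": "d", "before": {"id": 2, "email": "c@example.com"}, "after": None,
     "ts_ms": 1700000004020, "source": {"ts_ms": 1700000004000}},
]

replica = {}
for change in changes:
    apply_change(replica, change)

lags = [connector_lag_ms(change) for change in changes]
```

Tracking the maximum or a high percentile of these lag values over time is one simple way to spot a connector that is falling behind.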

Comparison of 10 Kafka Use Cases

| Use case | Implementation complexity | Resource requirements | Expected outcomes | Ideal use cases | Key advantages |
| --- | --- | --- | --- | --- | --- |
| Real-Time Event Streaming | Medium–High (partitioning, schemas, consumer groups) | High (broker clusters, network, storage) | Low-latency delivery, event replay, ordered streams | Real-time analytics, alerts, activity feeds, IoT events | Decouples producers/consumers; high throughput; replay & ordering |
| Data Pipeline and ETL Processing | High (orchestration, transforms, schema evolution) | High (compute for transforms, connectors, storage) | Reliable, scalable ETL to DW/lake; data lineage | Data warehousing, batch+stream ETL, multi-destination sync | Scales to petabytes; supports hybrid processing; separation of concerns |
| Log Aggregation and Analysis | Low–Medium (ingest, formatting, retention policies) | High (long-term storage, indexing integrations) | Centralized durable logs; real-time search & alerts | Centralized logging, security monitoring, operational debugging | Single source of truth; real-time anomaly detection; cost-effective vs legacy |
| Real-Time Analytics and BI | High (stream processing, state management) | High (continuous processing compute, state stores) | Immediate metrics, dashboards, alerts; sliding-window analytics | Live dashboards, anomaly detection, operational BI | Faster insights; continuous aggregation; enables ... |
