Change Data Capture (CDC) Tutorial: Get Started in Minutes

Streamkap Team

December 15, 2024

TL;DR

CDC captures database changes in real time, enabling low-latency data synchronization with minimal impact on source database performance.

Table of Contents

- What is Change Data Capture?

- Why Use CDC?

- How Streamkap Implements CDC

- Getting Started

What is Change Data Capture?

Change Data Capture (CDC) is a set of software design patterns used to determine and track data that has changed in a database. Instead of periodically querying for all data, CDC captures only the changes (inserts, updates, deletes) as they happen.
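As a minimal sketch of the idea, a consumer can keep a copy of a table current by applying only the inserts, updates, and deletes it receives. The `ChangeEvent` type and `apply_change` helper below are hypothetical names chosen for illustration; they are not part of any Streamkap or Debezium API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeEvent:
    """Hypothetical change record: one row-level change from the source."""
    op: str                      # "insert", "update", or "delete"
    key: int                     # primary key of the affected row
    row: Optional[dict] = None   # new row image; None for deletes


def apply_change(replica: dict, event: ChangeEvent) -> None:
    """Apply a single change to an in-memory replica keyed by primary key."""
    if event.op in ("insert", "update"):
        replica[event.key] = event.row
    elif event.op == "delete":
        replica.pop(event.key, None)
    else:
        raise ValueError(f"unknown operation: {event.op}")


replica = {}
changes = [
    ChangeEvent("insert", 1, {"name": "Ada"}),
    ChangeEvent("insert", 2, {"name": "Bob"}),
    ChangeEvent("update", 1, {"name": "Ada L."}),
    ChangeEvent("delete", 2),
]
for event in changes:
    apply_change(replica, event)
```

After the loop, the replica holds only row 1 with its updated name, and the consumer never had to re-read the full table.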

Why Use CDC?

Traditional batch ETL processes have several limitations:

- High latency: data can be hours or days old

- Database load: full table scans put strain on source databases

- Missed changes: updates between batch runs can be lost
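The "missed changes" limitation can be illustrated with a small simulation. This is a sketch only, assuming a batch job that compares two full snapshots of a table; `snapshot_diff` and the sample `orders` data are made up for illustration.

```python
def snapshot_diff(before: dict, after: dict) -> list:
    """Return the net changes a batch job sees when comparing two snapshots."""
    changes = []
    for key, row in after.items():
        if key not in before:
            changes.append(("insert", key, row))
        elif before[key] != row:
            changes.append(("update", key, row))
    for key in before:
        if key not in after:
            changes.append(("delete", key, None))
    return changes


# State of a hypothetical `orders` table at the first batch run.
first_run = {1: {"status": "new"}}

# What actually happened between the two batch runs.
actual_changes = [
    ("update", 1, {"status": "paid"}),
    ("update", 1, {"status": "shipped"}),
    ("insert", 2, {"status": "new"}),
    ("delete", 2, None),
]

# Replay the changes to get the table state at the second batch run.
second_run = dict(first_run)
for op, key, row in actual_changes:
    if op == "delete":
        second_run.pop(key, None)
    else:
        second_run[key] = row

seen_by_batch = snapshot_diff(first_run, second_run)
```

Here four changes occurred, but the snapshot comparison reports a single net update: the intermediate "paid" status and the short-lived order 2 never reach the destination.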

CDC solves these problems by:

1. Capturing changes in real-time as they occur

2. Minimizing database impact by reading transaction logs

3. Ensuring data completeness with ordered, exactly-once delivery
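One common way to get an exactly-once effect at a destination is to pair ordered log positions with an idempotent apply step, so that a redelivered event is skipped. The sketch below assumes each event carries a monotonically increasing `position` (standing in for a log offset such as an LSN). The class and field names are hypothetical, and this is not a description of how Streamkap implements delivery.

```python
class IdempotentSink:
    """Toy destination that ignores events at or below the last applied position."""

    def __init__(self):
        self.rows = {}
        self.last_position = -1  # highest log position applied so far

    def apply(self, position: int, op: str, key: int, row=None) -> bool:
        """Apply one event; return False if it was a duplicate and was skipped."""
        if position <= self.last_position:
            return False  # already applied, e.g. redelivered after a retry
        if op == "delete":
            self.rows.pop(key, None)
        else:  # treat inserts and updates as upserts
            self.rows[key] = row
        self.last_position = position
        return True


sink = IdempotentSink()
events = [
    (0, "insert", 1, {"qty": 1}),
    (1, "update", 1, {"qty": 2}),
    (1, "update", 1, {"qty": 2}),   # duplicate delivery of position 1
    (2, "delete", 1, None),
    (0, "insert", 1, {"qty": 1}),   # stale replay of position 0
]
results = [sink.apply(*event) for event in events]
```

The duplicate and the stale replay are both rejected, so the destination ends in the same state it would reach if every event had arrived exactly once and in order.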

How Streamkap Implements CDC

Streamkap uses Debezium connectors to read database transaction logs (WAL for PostgreSQL, binlog for MySQL) and stream changes to your destinations in real-time.
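To make this more concrete, here is a hedged sketch of reading a Debezium-style change event. Debezium wraps each row change in an envelope with `before`, `after`, `source`, and `op` fields, where `op` is `c` (create), `u` (update), `d` (delete), or `r` (snapshot read). The `summarize_event` helper and the sample event are illustrative assumptions; real events carry more metadata, and the exact shape depends on connector and converter settings.

```python
import json

# Debezium operation codes as used in its change event envelope.
OP_NAMES = {"c": "insert", "u": "update", "d": "delete", "r": "snapshot read"}


def summarize_event(raw: str) -> dict:
    """Extract the operation, table, and row image from a Debezium-style event."""
    event = json.loads(raw)
    # With schemas enabled the envelope sits under "payload"; otherwise it is the top level.
    payload = event.get("payload", event)
    op = payload["op"]
    # Deletes carry the old row in "before"; other operations carry the new row in "after".
    row = payload["before"] if op == "d" else payload["after"]
    return {
        "operation": OP_NAMES.get(op, op),
        "table": payload["source"]["table"],
        "row": row,
    }


# Illustrative sample only; real events carry more source metadata.
sample = json.dumps({
    "payload": {
        "before": {"id": 7, "email": "old@example.com"},
        "after": {"id": 7, "email": "new@example.com"},
        "source": {"connector": "postgresql", "table": "customers"},
        "op": "u",
        "ts_ms": 1734220800000,
    }
})
summary = summarize_event(sample)
```

A downstream consumer could use a summary like this to decide whether to upsert or delete the row in the destination.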

Supported Sources

- PostgreSQL

- MySQL

- MongoDB

- SQL Server

- Oracle

Supported Destinations

- Snowflake

- Databricks

- ClickHouse

- BigQuery

- Kafka

Getting Started

1. Sign up for a free Streamkap account

2. Connect your source database

3. Configure your destination data warehouse

4. Start streaming in minutes

Ready to get started? Sign up for free and start syncing your data in real-time.

Author Bio

The Streamkap team builds modern real-time data infrastructure to help companies sync data between systems.


Related blog posts

Batch Processing vs Real-Time Stream Processing

There is a big movement underway in the migration from batch ETL to real-time streaming ETL but what does that mean? How do these methods compare? While real-time data streaming has many advantages over batch processing, it is not always the right choice depending on the use case so let's take a loo

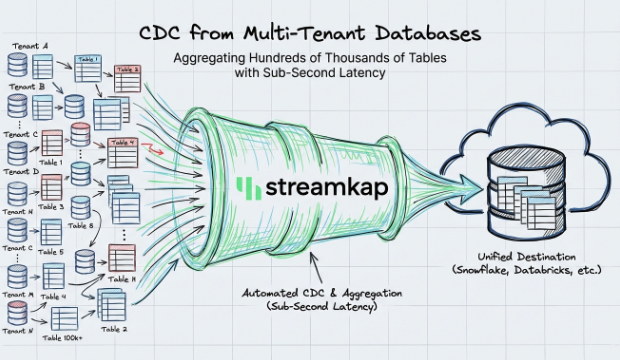

CDC from Multi-Tenant Databases with Sub-Second Latency

How Streamkap handles CDC at scale across multi-tenant databases with thousands of schemas, delivering sub-second latency without managing Kafka or Flink.

Change Data Capture for Streaming ETL

Change Data Capture refers to the process of capturing changes made to data in a source system such as a database, so that these change events can be used in the destination system, such as a data warehouse, data lake, data app, machine learning models, indexes, or caches.